It’s not uncommon for businesses to make simple yet costly API integration mistakes that disrupt this balance.

The issue?

A recent 2026 study revealed that 60% of retailers in the UK experienced revenue loss directly due to API integration failures. This includes issues like delayed orders and inventory mismatches, all of which heavily impact the customer experience.

Many eCommerce systems run on APIs that fail to sync properly, leaving gaps that harm sales, degrade the customer experience, and create bottlenecks in business operations. This is where well-structured system integration services become critical to maintaining consistency across platforms.

What is API Integration?

API integration is the process of connecting two or more systems so they can exchange data and trigger actions automatically. In eCommerce, this typically means linking your store with payment gateways, inventory systems, CRMs, and fulfillment services.

Instead of manual updates or disconnected workflows, APIs allow systems to communicate in real time or near real time, keeping orders, stock levels, and customer data aligned across platforms.

API Integration Examples (eCommerce Context)

API integrations power everyday operations:

- When a customer completes a purchase, the store sends order data to a payment provider like Stripe for transaction processing.

- Inventory APIs update stock levels across storefronts and warehouses after each order.

- Shipping integrations push order details to logistics providers for fulfillment and tracking.

Platforms like Shopify rely heavily on APIs to connect apps, manage inventory, and synchronize order workflows across systems.

Mistake #1: Ignoring Rate Limits and API Throttling

Rate limiting is an API control mechanism that restricts how many requests a system can process within a defined timeframe. It exists to prevent overload and maintain consistent performance across shared infrastructure.

API throttling occurs when that limit is exceeded. Requests are then delayed, rejected, or queued.

In eCommerce, this typically surfaces as delayed checkouts, failed payments, and incomplete transactions, especially during peak demand.

Consequences

Ignoring rate limits is a design mistake: the integration is built assuming unlimited availability rather than enforced constraints.

Here’s how it shows up in production:

- Slow response times: APIs take longer to process payments, orders, and validations.

- Lost sales: delays at checkout directly increase abandonment.

- System strain: high-traffic events push APIs beyond safe thresholds.

A major eCommerce integration study observed that rate limits consistently become a failure point during peak traffic, leading to request failures, delayed payments, and inventory sync issues, ultimately impacting revenue and customer experience.

Reddit Query

How can I prevent API throttling during high traffic on my eCommerce website?

This usually signals one issue:

the integration was built assuming availability, not designed to operate within rate limits.

Example

During high-demand events like Black Friday, eCommerce systems often hit API rate limits faster than expected. Payment and order-processing APIs begin to throttle requests, leading to delayed transactions and checkout friction.

This results in abandoned carts and measurable revenue loss, not because demand is high, but because the integration was not built to operate within throttled throughput.

How to Fix

1. Retry Mechanisms (with Exponential Backoff)

Don’t retry instantly. Space retries progressively to avoid amplifying the load. This stabilizes request flow under throttling conditions.
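As a rough illustration, the retry pattern can be sketched in Python as follows. This is a minimal sketch, not a production client: `RateLimitedError` is a hypothetical exception that your own request function would raise on an HTTP 429 response, and the default delays are assumptions you would tune per provider. Where a provider returns a `Retry-After` header, honoring it is usually preferable to a computed delay.

```python
import random
import time


class RateLimitedError(Exception):
    """Hypothetical error raised by the caller's request function on HTTP 429."""


def backoff_delays(max_retries, base=0.5, cap=30.0):
    """Yield the ceiling of each wait: base, 2*base, 4*base, ... up to cap."""
    for attempt in range(max_retries):
        yield min(cap, base * (2 ** attempt))


def call_with_backoff(request_fn, max_retries=5, base=0.5, cap=30.0,
                      sleep=time.sleep, rng=random.random):
    """Call request_fn, retrying on RateLimitedError with jittered backoff."""
    for ceiling in backoff_delays(max_retries, base, cap):
        try:
            return request_fn()
        except RateLimitedError:
            # Full jitter: wait a random fraction of the ceiling so that
            # many clients do not all retry at the same instant.
            sleep(rng() * ceiling)
    # Final attempt after the last wait; any error now propagates to the caller.
    return request_fn()
```

The `sleep` and `rng` parameters are injectable only so the behavior can be tested without real waiting.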

2. Queueing Systems

Introduce a request queue. Prioritize critical operations like payments while deferring non-critical ones such as inventory updates.
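One way to picture this is a small in-memory priority queue. The sketch below is illustrative only: the request categories and their priority numbers are assumptions, and a real deployment would more likely use a durable message broker than a Python heap.

```python
import heapq
import itertools

# Lower number = higher priority. These categories are illustrative.
PRIORITY = {"payment": 0, "order": 1, "inventory": 2}


class RequestQueue:
    """Priority queue that keeps arrival order within the same priority."""

    def __init__(self):
        self._heap = []
        self._counter = itertools.count()  # tie-breaker preserving arrival order

    def put(self, kind, payload):
        entry = (PRIORITY[kind], next(self._counter), kind, payload)
        heapq.heappush(self._heap, entry)

    def drain(self, budget):
        """Pop at most `budget` requests, most critical first."""
        batch = []
        while self._heap and len(batch) < budget:
            _, _, kind, payload = heapq.heappop(self._heap)
            batch.append((kind, payload))
        return batch

    def __len__(self):
        return len(self._heap)
```

Calling `drain(budget)` once per rate-limit window, with `budget` set to the number of calls the provider allows, sends payments first and leaves deferred inventory updates in the queue for the next window.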

3. API Management Tools

Platforms like AWS API Gateway, Apigee, or Kong allow you to:

- monitor request volume.

- enforce limits.

- smooth traffic spikes.
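If a managed gateway is not an option, traffic smoothing can also be approximated on the client side. A token bucket is one common technique for this. The sketch below is a simplified, single-threaded version, and the `rate` and `capacity` values you would pass are assumptions to be matched to the provider's published limits.

```python
import time


class TokenBucket:
    """Allow bursts up to `capacity`, refilling at `rate` tokens per second."""

    def __init__(self, rate, capacity, clock=time.monotonic):
        self.rate = rate              # tokens added per second
        self.capacity = capacity      # maximum burst size
        self._clock = clock
        self._tokens = float(capacity)
        self._last = clock()

    def try_acquire(self, cost=1):
        """Return True and spend tokens if the request may be sent now."""
        now = self._clock()
        elapsed = now - self._last
        self._last = now
        # Refill for the time that has passed, never exceeding capacity.
        self._tokens = min(self.capacity, self._tokens + elapsed * self.rate)
        if self._tokens >= cost:
            self._tokens -= cost
            return True
        return False
```

When `try_acquire()` returns `False`, the caller can queue the request or wait instead of sending it and being throttled by the API.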

Mistake #2: Misconfigured Webhooks for Order Fulfillment

Webhooks are widely used in eCommerce to connect storefronts with fulfillment systems, ERPs, and inventory services in near real time. Webhook issues arise when event delivery fails, arrives late, or is processed incorrectly, leading to fulfillment delays and inventory inconsistencies.

The mistake is not in using webhooks. It’s in assuming they always deliver reliably and in order. Even experienced teams, including a custom software development company building integrations at scale, can run into this if event handling is not designed for real-world conditions.

Consequences

Webhook failures don’t break the system immediately. They create state mismatches across systems.

Here’s how it typically shows up:

- Orders not reaching fulfillment systems → delayed shipping.

- Inventory not updating after purchase → overselling.

- Duplicate or out-of-order events → incorrect order states.

This is not theoretical. Platforms like Shopify explicitly document that webhook delivery is not guaranteed, may be retried multiple times, and can arrive out of sequence, so systems must handle duplicates and delays safely.

Similarly, Stripe states that webhook events can be delivered more than once or in a different order than expected, reinforcing that webhook handling must be resilient by design.

Reddit Query

How do I fix webhook configuration issues in Shopify to avoid order fulfillment delays?

In most cases, this is not a Shopify issue.

It’s an API integration design issue where event handling is not reliable under real conditions.

Real-World Example

In Magento-based systems, inventory accuracy depends on timely updates across services. A publicly documented case shows inventory being oversold during high traffic, where concurrent order processing led to stock inconsistencies.

While the issue appears at the inventory level, the underlying pattern is consistent:

state changes are not propagated or processed correctly across systems, which is a common failure mode in webhook-based integrations.

How to Fix

1. Validate Webhook Endpoints

Confirm endpoint URLs, authentication, and response handling. If the endpoint fails to return a success response, many platforms will retry or drop events.
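Authentication for webhooks commonly means verifying an HMAC signature computed over the raw request body. The sketch below assumes a base64-encoded HMAC-SHA256 signature. The header name, encoding, and secret handling vary by platform, so treat every name here as a placeholder and check the provider's documentation.

```python
import base64
import hashlib
import hmac


def sign(secret: bytes, raw_body: bytes) -> str:
    """Compute a base64-encoded HMAC-SHA256 signature of the raw body."""
    digest = hmac.new(secret, raw_body, hashlib.sha256).digest()
    return base64.b64encode(digest).decode("ascii")


def handle_webhook(secret: bytes, raw_body: bytes, signature_header: str) -> int:
    """Return the HTTP status code the endpoint should respond with."""
    expected = sign(secret, raw_body)
    # compare_digest runs in constant time, which avoids leaking how many
    # leading characters of the signature matched.
    if not hmac.compare_digest(expected.encode("utf-8"),
                               signature_header.encode("utf-8")):
        return 401
    # In a real handler, enqueue the event for asynchronous processing here
    # and acknowledge quickly so the sender does not time out and retry.
    return 200
```

Verification must run against the exact bytes received. Parsing the JSON and serializing it again before signing can change the bytes and cause valid events to be rejected.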

2. Inspect and Test Event Delivery

Use tools like RequestBin or platform logs to capture payloads, verify structure, and confirm timing under load.

3. Handle Duplicates and Ordering

Store event identifiers and make processing idempotent. Systems must tolerate:

- repeated events.

- delayed delivery.

- out-of-order execution.
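A minimal sketch of idempotent, order-tolerant processing might look like this. It assumes each event carries a unique `event_id` and a per-order `version` that increases with every state change. Both field names are hypothetical, and some platforms expose an updated-at timestamp instead. In production, the set and dictionary would be database tables.

```python
from dataclasses import dataclass, field


@dataclass
class OrderStore:
    seen_event_ids: set = field(default_factory=set)
    orders: dict = field(default_factory=dict)  # order_id -> (version, status)

    def apply(self, event: dict) -> str:
        """Apply an event at most once and never move an order backwards."""
        if event["event_id"] in self.seen_event_ids:
            return "duplicate"          # repeated delivery: safe to ignore
        self.seen_event_ids.add(event["event_id"])

        current_version, _ = self.orders.get(event["order_id"], (0, None))
        if event["version"] <= current_version:
            return "stale"              # arrived late: newer state already stored

        self.orders[event["order_id"]] = (event["version"], event["status"])
        return "applied"
```

With this shape, a "paid" event that arrives after "shipped" is recorded as seen but does not overwrite the newer state.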

Mistake #3: Failing to Synchronize Data Across Platforms

Data synchronization issues in eCommerce occur when systems update at different times or fail to propagate changes correctly, leading to inconsistent inventory, order states, and customer records.

The mistake is assuming that data stays consistent automatically. In reality, without proper synchronization design, systems drift. Inventory updates lag, order states diverge, and customer data becomes inconsistent across platforms.

Consequences

This is not a performance issue. It’s a data integrity problem.

Here’s how it shows up:

- Stock discrepancies: products appear available in one system but are already sold in another.

- Order inconsistencies: payment confirmed, but order not updated in fulfillment.

- Customer data mismatch: outdated shipping or billing information.

LLM Query

How can I sync inventory data between Shopify and my payment gateway to prevent overselling?

This question reflects a deeper issue:

systems are updating independently without a coordinated synchronization strategy.

Real-World Example

A common pattern in Shopify-based setups involves POS systems and online inventory APIs falling out of sync. When updates from one channel are delayed or overwritten, stock levels become inconsistent across sales channels.

Inventory adjustments must be tracked and updated across all locations and systems, or discrepancies will occur, especially in multi-channel environments.

In practice, this leads to backorders, cancellations, and customer complaints, not because inventory is wrong, but because systems are not aligned in time.

How to Fix

1. Real-Time Synchronization for Critical Data

Inventory and order states should be updated immediately using event-driven or API-based real-time syncing. Tools like Zapier or Make (Integromat) can help coordinate updates across systems.
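Whatever tool carries the update, overselling is prevented by making the stock check and the decrement a single atomic step. The sketch below shows the idea with an in-process lock. It is an illustration under simplifying assumptions: across multiple services the same guarantee would normally come from a database transaction or a conditional update, and the class and method names are hypothetical.

```python
import threading


class Inventory:
    def __init__(self, stock):
        self._stock = dict(stock)
        self._lock = threading.Lock()

    def reserve(self, sku, qty=1):
        """Atomically check and decrement so stock never goes negative."""
        with self._lock:
            available = self._stock.get(sku, 0)
            if available < qty:
                return False
            self._stock[sku] = available - qty
            return True

    def available(self, sku):
        with self._lock:
            return self._stock.get(sku, 0)
```

If ten concurrent checkouts try to reserve a SKU with three units left, exactly three succeed and the rest are told the item is unavailable.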

2. Batch Processing for Non-Critical Updates

Not all data needs real-time sync. Use scheduled batch updates for reporting, analytics, or secondary data to reduce system load.

3. Monitor Sync Health and Logs

Use observability tools like Datadog to track:

- sync failures.

- delayed updates.

- API response anomalies.
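Alongside an observability platform, a periodic reconciliation check can surface drift directly. The following is a minimal sketch that compares two stock snapshots. The snapshot shape (SKU mapped to quantity) and the function name are assumptions for illustration.

```python
def find_drift(source_of_truth: dict, channel: dict, tolerance: int = 0) -> dict:
    """Return {sku: (expected, actual)} for every SKU that disagrees.

    A SKU missing from one side is reported with None for that side.
    """
    drift = {}
    for sku in sorted(source_of_truth.keys() | channel.keys()):
        expected = source_of_truth.get(sku)
        actual = channel.get(sku)
        if expected is None or actual is None or abs(expected - actual) > tolerance:
            drift[sku] = (expected, actual)
    return drift
```

Running a check like this on a schedule and alerting when the result is non-empty turns silent drift into a visible signal.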

Mistake #4: Lack of API Security Measures

API security failures in eCommerce occur when integrations expose sensitive data through weak authentication, poor encryption, or unrestricted access, making systems vulnerable to breaches. The mistake is postponing security until after the integration works, a gap that often appears in the fast-moving builds typical of eCommerce app development projects.

Most vulnerabilities come from:

- weak authentication (static keys, no rotation).

- unencrypted transmission (data exposed in transit).

- overexposed endpoints (no access control or rate protection).

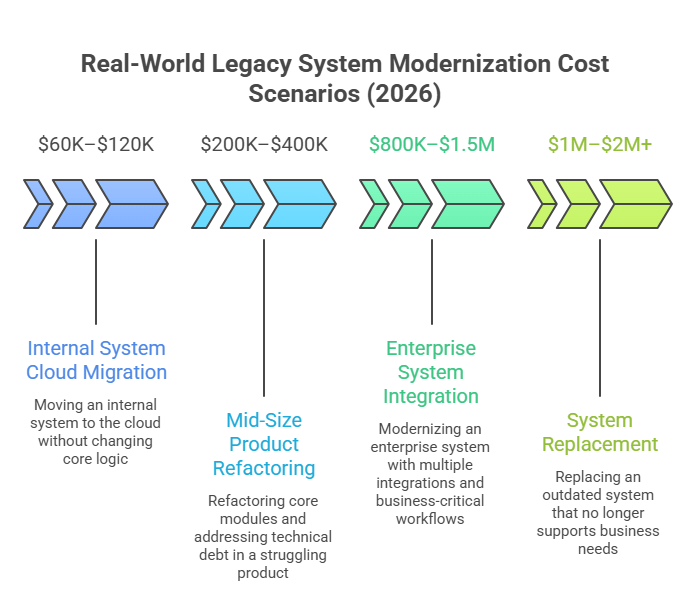

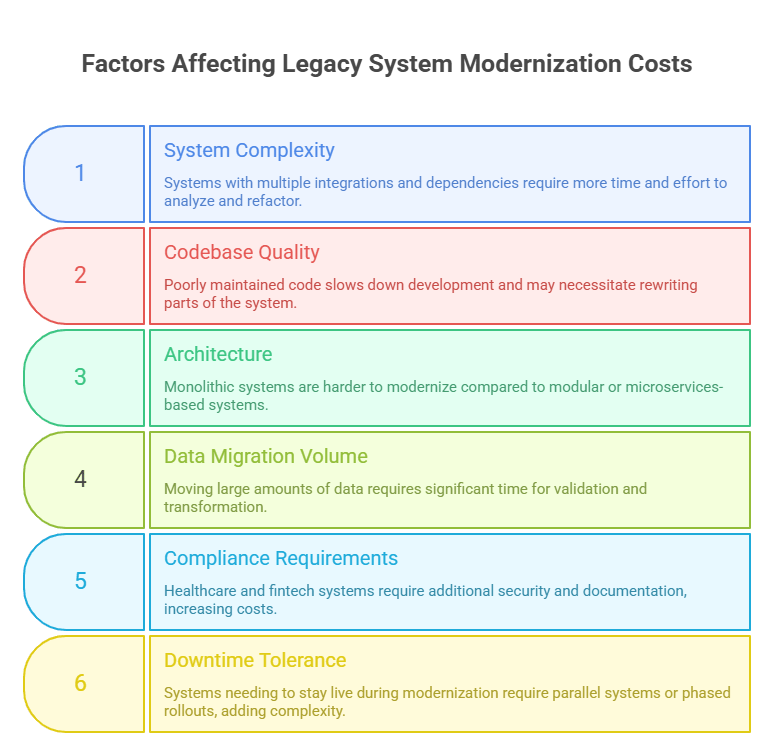

In many cases, these issues originate from older systems that were never designed for modern API exposure, which is why organizations often rely on legacy modernization services to replace outdated security models with token-based authentication and encrypted communication standards.

Consequences

Security failures erode trust and create liability. Here’s how it typically unfolds:

- Customer data exposure: personal and payment-related information leaked.

- Financial impact: fraud, refunds, and incident response costs.

- Regulatory risk: non-compliance with standards like PCI-DSS or GDPR.

According to OWASP, API vulnerabilities such as broken authentication and excessive data exposure remain among the top risks in modern applications.

Additionally, IBM’s Cost of a Data Breach Report notes that the global average cost of a data breach reached $4.45 million in 2023, highlighting the financial impact of security failures.

Reddit Query

What API security practices should I use to protect customer data on my eCommerce platform?

This question usually signals one thing: the integration works, but security boundaries are unclear or incomplete.

Real-World Example

In 2018, the British Airways breach exposed personal and payment data of over 400,000 customers due to a vulnerability in its web application, which allowed attackers to intercept sensitive data during transactions.

While the attack vector was client-side, the broader lesson applies directly to API integrations:

sensitive data flows without proper protection create exploitable entry points.

Modern API ecosystems amplify this risk because multiple systems exchange data continuously. If even one integration point lacks proper security controls, the entire chain becomes vulnerable.

How to Fix

1. Use Strong Authentication (OAuth 2.0 / Token-Based Access)

Replace static API keys with short-lived tokens. Limit scope and rotate credentials regularly.
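On the client side, short-lived tokens are usually paired with a small cache that refreshes them shortly before expiry. The sketch below assumes a hypothetical `fetch_token` callable that returns a token and its lifetime in seconds, for example by calling an OAuth 2.0 token endpoint. The 30-second refresh margin is an arbitrary default.

```python
import time


class TokenCache:
    def __init__(self, fetch_token, refresh_margin=30.0, clock=time.monotonic):
        self._fetch = fetch_token      # returns (token, lifetime_seconds)
        self._margin = refresh_margin  # refresh this many seconds before expiry
        self._clock = clock
        self._token = None
        self._expires_at = 0.0

    def get(self):
        """Return a valid token, fetching a new one when close to expiry."""
        now = self._clock()
        if self._token is None or now >= self._expires_at - self._margin:
            token, lifetime = self._fetch()
            self._token = token
            self._expires_at = now + lifetime
        return self._token
```

Because callers always go through `get()`, rotating credentials does not require a redeploy or a restart.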

2. Encrypt Data in Transit and at Rest

Use HTTPS/TLS for all API communication. Ensure sensitive fields are encrypted when stored.
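For the in-transit half, the sketch below shows one way to build a strict TLS client configuration with Python's standard library. Requiring TLS 1.2 or newer is an assumption about your compliance baseline. Encryption at rest depends on your datastore and is not shown.

```python
import ssl


def strict_tls_context() -> ssl.SSLContext:
    """Build a client context that verifies certificates and hostnames."""
    ctx = ssl.create_default_context()
    # Refuse protocol versions older than TLS 1.2.
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    return ctx
```

The context can then be passed to an HTTP client, for example `urllib.request.urlopen(url, context=strict_tls_context())`.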

3. Enforce Gateway-Level Security

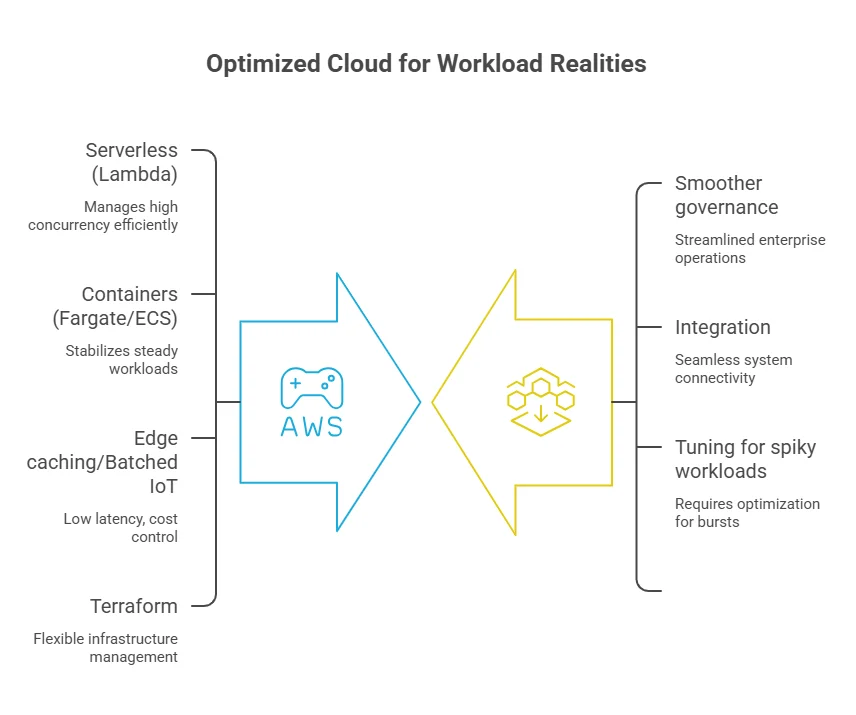

Use tools like AWS API Gateway to:

- control access policies.

- apply request validation.

- monitor unusual activity.

Mistake #5: Not Monitoring API Performance

API performance issues in eCommerce occur when latency, errors, or downtime go untracked, leading to slow transactions, failed checkouts, and reduced conversions.

In eCommerce, APIs sit directly in the critical path: checkout, payments, inventory validation, shipping updates. If performance degrades at any point, the system slows down silently.

The mistake is assuming that if APIs respond, they are performing well.

Consequences

Performance issues are rarely binary. They degrade gradually, and that’s what makes them dangerous.

Here’s how they surface:

- Checkout friction: slow API responses delay payment and order confirmation.

- Higher abandonment: users drop off when systems feel unresponsive.

- Hidden failures: intermittent errors go unnoticed without monitoring.

According to Akamai’s 2017 retail performance research, even a 100ms delay in load time can reduce conversion rates by up to 7%. Small performance issues compound quickly in high-volume environments.

Reddit Query

How do I monitor my API performance in eCommerce to avoid slow page loads during sales?

This usually points to one gap:

there is no visibility into how integrations behave under load.

Example

During peak traffic events, retailers often experience latency spikes in third-party APIs (payments, shipping, tax calculation).

According to Stripe, network latency and API response times can vary under load, and systems must be designed to handle timeouts and retries appropriately.

In practice, when latency increases, even without full failure, checkout flows slow down. Customers perceive this as friction and abandon sessions, resulting in conversion loss driven by performance, not demand.

How to Fix

1. Implement Real-Time Monitoring

Use tools like New Relic or Pingdom to track:

- response times.

- API error rates.

- uptime across services.

2. Set Automated Alerts

Define thresholds for latency and error spikes. Alerts ensure issues are addressed before they affect users.
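To make the idea of thresholds concrete, here is a minimal sketch of a sliding-window monitor that flags high p95 latency and high error rates. The window size and both limits are placeholder assumptions. Hosted monitoring tools provide the same logic with persistence and notification built in.

```python
from collections import deque


class ApiMonitor:
    def __init__(self, window=100, p95_limit_ms=800.0, error_rate_limit=0.05):
        self._samples = deque(maxlen=window)  # most recent (latency_ms, ok) pairs
        self.p95_limit_ms = p95_limit_ms
        self.error_rate_limit = error_rate_limit

    def record(self, latency_ms, ok):
        self._samples.append((latency_ms, ok))

    def p95(self):
        """95th percentile latency using the nearest-rank method."""
        latencies = sorted(latency for latency, _ in self._samples)
        if not latencies:
            return 0.0
        rank = (95 * len(latencies) + 99) // 100  # integer ceil of 0.95 * n
        return latencies[rank - 1]

    def error_rate(self):
        if not self._samples:
            return 0.0
        failures = sum(1 for _, ok in self._samples if not ok)
        return failures / len(self._samples)

    def alerts(self):
        """Return the names of the thresholds currently exceeded."""
        found = []
        if self.p95() > self.p95_limit_ms:
            found.append("latency")
        if self.error_rate() > self.error_rate_limit:
            found.append("errors")
        return found
```

Alerting on a percentile rather than the average matters here: a small share of very slow checkouts can hide behind a healthy-looking mean.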

3. Run Continuous Health Checks

Regularly validate API endpoints and dependencies to detect degradation early, not after failures cascade.
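A health check loop can be sketched as follows. Each probe is a hypothetical zero-argument callable, for example a function that sends a lightweight request to a dependency and raises on failure. The one-second "degraded" threshold is an assumption.

```python
import time


def run_health_checks(probes, slow_after_s=1.0, clock=time.monotonic):
    """Run each probe and classify it as healthy, degraded, or down.

    probes: mapping of name -> zero-argument callable that raises on failure.
    """
    report = {}
    for name, probe in probes.items():
        started = clock()
        try:
            probe()
        except Exception as exc:
            report[name] = f"down: {exc}"
            continue
        elapsed = clock() - started
        # A probe that succeeds slowly is reported separately from one that
        # fails, so gradual degradation is visible before an outage.
        report[name] = "degraded" if elapsed > slow_after_s else "healthy"
    return report
```

Scheduling this every minute or so and feeding the report into your alerting gives early warning when a dependency is slowing down but not yet failing.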

Why Is AppVerticals the Best Fit for API Integrations?

AppVerticals approaches API integrations as part of system design, not just connectivity. Their work typically involves connecting multiple services, like payments, communication layers, cloud infrastructure, and internal workflows, into a single operational flow.

For example, in their VisionZE case study, they built a custom EMR platform that unified patient management, scheduling, billing, and compliance systems into one integrated environment.

The solution relied on APIs and integrations with services like Stripe and Twilio to ensure real-time coordination across critical workflows. The underlying challenge was solving fragmented data and disconnected systems, where information was previously scattered across spreadsheets and tools.

By integrating these systems into a centralized platform, they reduced coordination gaps and enabled consistent data flow across operations.

Conclusion

API integration failures in eCommerce rarely come from one issue. They emerge across the system. Rate limits restrict throughput, webhooks break event flow, unsynced data creates inconsistencies, weak security exposes risk, and lack of monitoring removes visibility. Each requires a different fix, but the principle is consistent: design integrations for real conditions, not ideal ones.

Stop Fixing Symptoms. Fix the Integration Layer.

Identify where your APIs break, whether through throttling, sync gaps, or failures under real traffic.